DEALta

Stateful Review Orchestration for Multi-Team Workflows

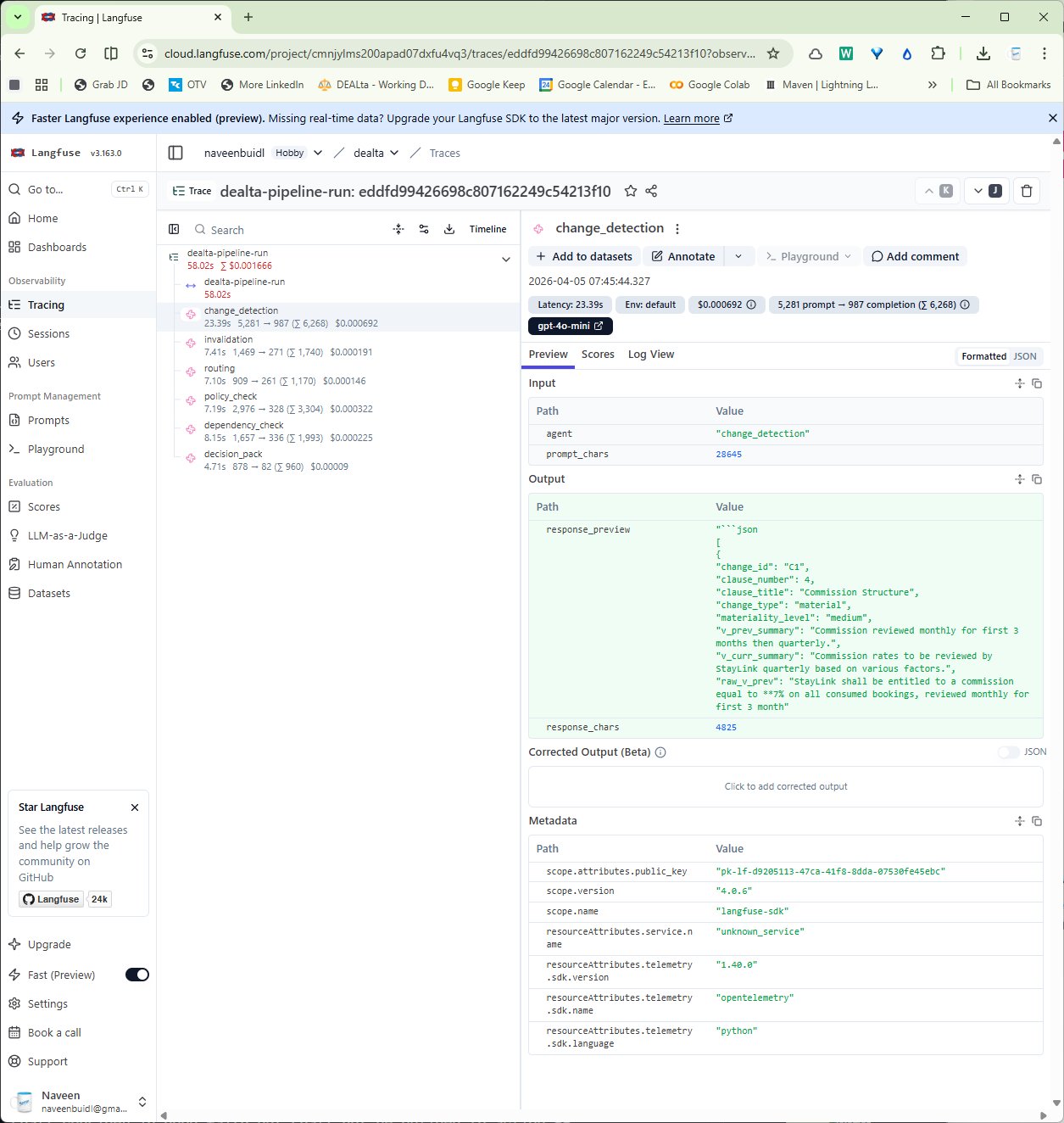

Detects what changed in a document, routes review tasks to the right teams, and tracks which prior approvals are no longer safe to trust after later revisions.

Stateful Across Rounds

Multi-Agent Orchestration

Eval-First

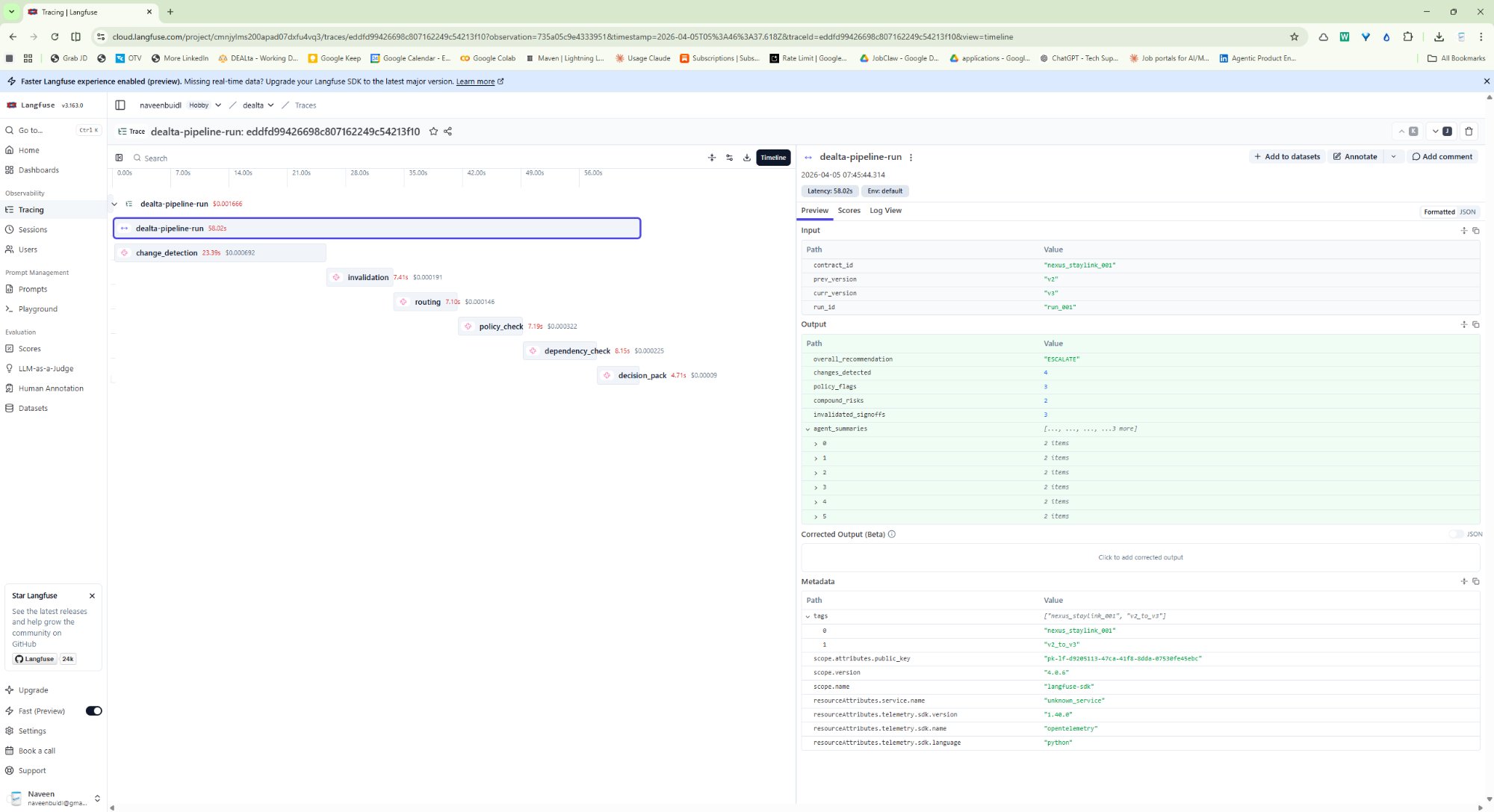

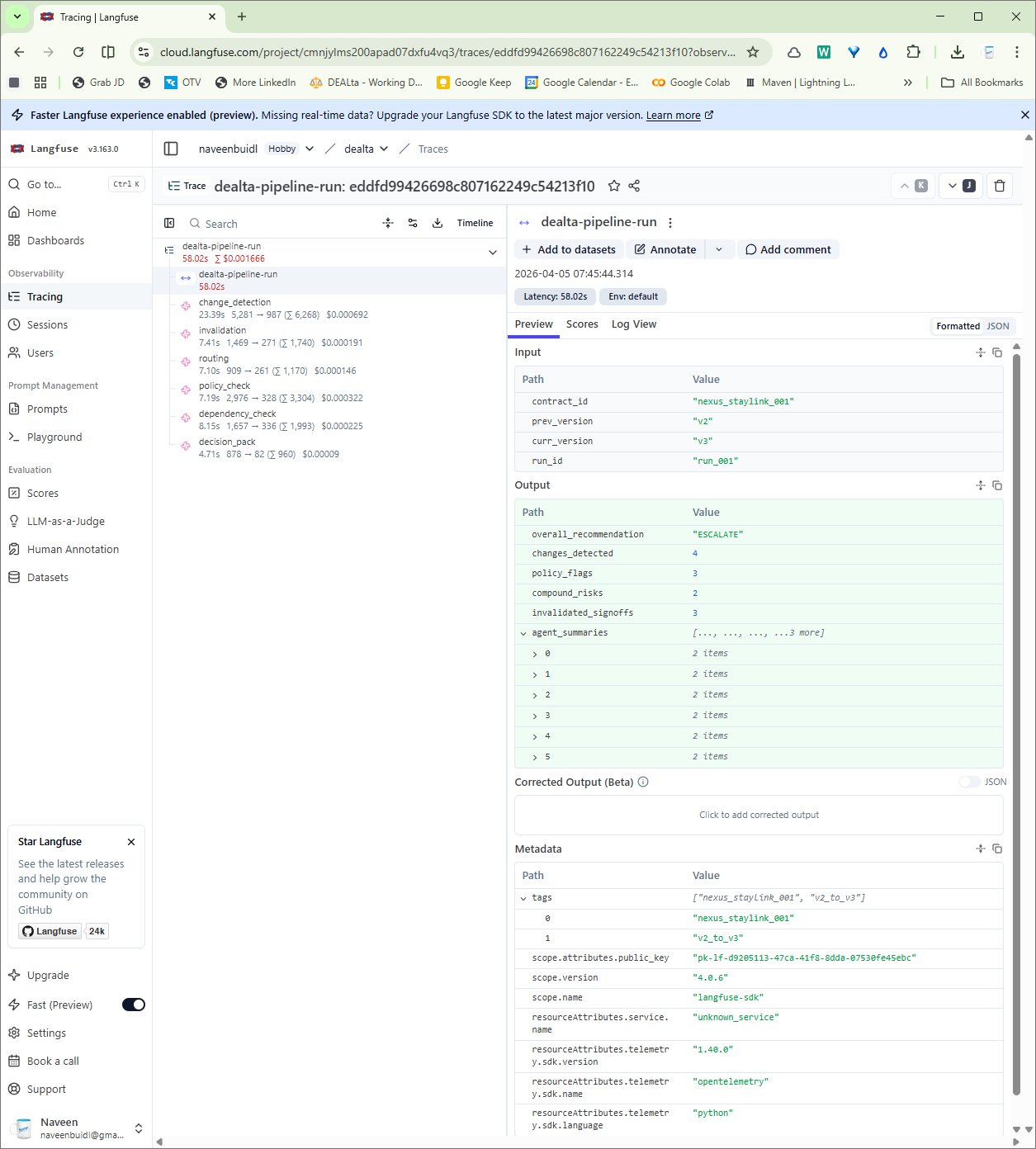

Langfuse Traced

Built from experience coordinating a supplier agreement across Legal, Finance, Commercial, Product/Tech, and Customer Support.

In a previous role, I coordinated a supplier agreement renegotiation across five business functions. By the third round, Finance had approved commission terms that v3 silently changed. Legal had signed off on liability terms that v3 made asymmetric. No single reviewer had visibility across all three broken approvals at once, and the contract was heading toward execution. The failure mode wasn't "nobody reviewed it." It was "everyone reviewed their part, and nobody saw the whole." DEALta is designed to catch that.
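
As a rough illustration of the stale-approval problem described above, here is a minimal Python sketch. It is not DEALta's actual implementation: the `Approval` class, the `OWNERS` map, both function names, and the sample clause text are hypothetical, chosen only to mirror the Finance and Legal example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    team: str
    section: str
    version: int  # document version the team signed off on


# Hypothetical section-to-team ownership map (an assumption for this sketch).
OWNERS = {"commission": "Finance", "liability": "Legal", "sla": "Customer Support"}


def changed_sections(old, new):
    """Names of sections whose text differs between two versions."""
    return sorted(k for k in old.keys() | new.keys() if old.get(k) != new.get(k))


def stale_approvals(versions, approvals):
    """Approvals whose section text changed after the approved version.

    `versions` maps a version number to {section name: section text}.
    """
    latest = max(versions)
    return [
        a for a in approvals
        if versions[a.version].get(a.section) != versions[latest].get(a.section)
    ]


# Made-up clause text for illustration only.
versions = {
    2: {
        "commission": "10% of net revenue",
        "liability": "Mutual cap at 12 months of fees",
        "sla": "Response within 24 hours",
    },
    3: {
        "commission": "8% of net revenue",
        "liability": "Supplier cap at 12 months of fees; buyer uncapped",
        "sla": "Response within 24 hours",
    },
}

approvals = [
    Approval("Finance", "commission", 2),
    Approval("Legal", "liability", 2),
    Approval("Customer Support", "sla", 2),
]

changed = changed_sections(versions[2], versions[3])
teams_to_notify = sorted({OWNERS[s] for s in changed})
stale = stale_approvals(versions, approvals)

print(changed)                   # ['commission', 'liability']
print(teams_to_notify)           # ['Finance', 'Legal']
print([a.team for a in stale])   # ['Finance', 'Legal']
```

The point of the sketch is that each approval is tied to the version it was given against, so a later revision can be compared with what was actually signed off instead of assuming the sign-off still holds.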